Div Garg, CEO of MultiOn

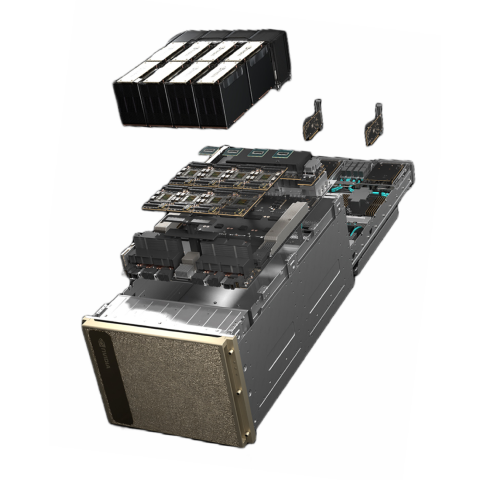

"Accessing high-demand resources like NVIDIA H100 GPUs was difficult for an AI startup. Partnering with GreenNode has helped us overcome this challenge, as we have instant access to H100 GPUs and are able to scale our H100 GPU infrastructure quickly.

This enables us to continually fine-tune our model and thus enhance our AI agent product to meet market demand and adoption. Their flexible payment terms are also crucial in keeping our costs and cash flow manageable.

Last but not least, we appreciate their speedy technical support when we want to upgrade the Internet bandwidth or need to have a shared filesystem for our dataset."